The Snapshot

Migrate the entire infrastructure to AWS.

Provision infrastructure on demand, with GPUs available on different continents.

Ensure the scalability and stability of our infrastructure.

The Challenge

To support its growth and accelerate the production and availability of its models, LightOn chose to migrate to the AWS Cloud, and in particular to rely on Amazon SageMaker. The Devoteam Revolve teams supported the company in this project, with the following objectives:

- Creating a Landing Zone with Terraform, in compliance with AWS best practices.

- Migrating and optimising resources from another hyperscaler to AWS.

- Automating the creation of Machine Learning models and their publication on the AWS Marketplace.

The Solution

In this interview, Lilian Debaque, Cloud Architect at LightOn, talks with us about the completion of this project alongside the Devoteam Revolve teams.

In what context did you choose to migrate to the AWS Cloud?

Our main need was to be able to provision infrastructure on demand, and especially to have GPUs available on different continents. The migration therefore aimed to ensure the scalability and stability of our infrastructure, in a context where we use particularly powerful GPUs. However, our solution remains provider-agnostic: Paradigm can be deployed on any provider, or on premises.

What was the scope of the migration?

To rationalise both the infrastructure and the application side, we chose to migrate the entire infrastructure to AWS. In particular, this choice makes it easier to interconnect Amazon SageMaker, which serves the models, with the infrastructure that hosts the data, Paradigm, and so on.

What skills did you request from Devoteam Revolve?

The objective of this support was to help us accelerate the move to the AWS Cloud. We were looking for technical counterparts to carry out work that we could not do ourselves for lack of time, and we found experts capable of carrying out the project without us needing to train them, manage them or closely monitor progress.

We therefore sought the expertise of Devoteam Revolve in three areas: creating a Landing Zone based on infrastructure as code and AWS best practices, migrating and optimising resources from another hyperscaler to AWS, and automating the creation of Machine Learning models and their distribution on the AWS Marketplace.

Regarding the Landing Zone, we needed a slightly more specific approach, adapted to our particular needs while still respecting AWS best practices, and Devoteam Revolve showed the adaptability needed to meet this request.

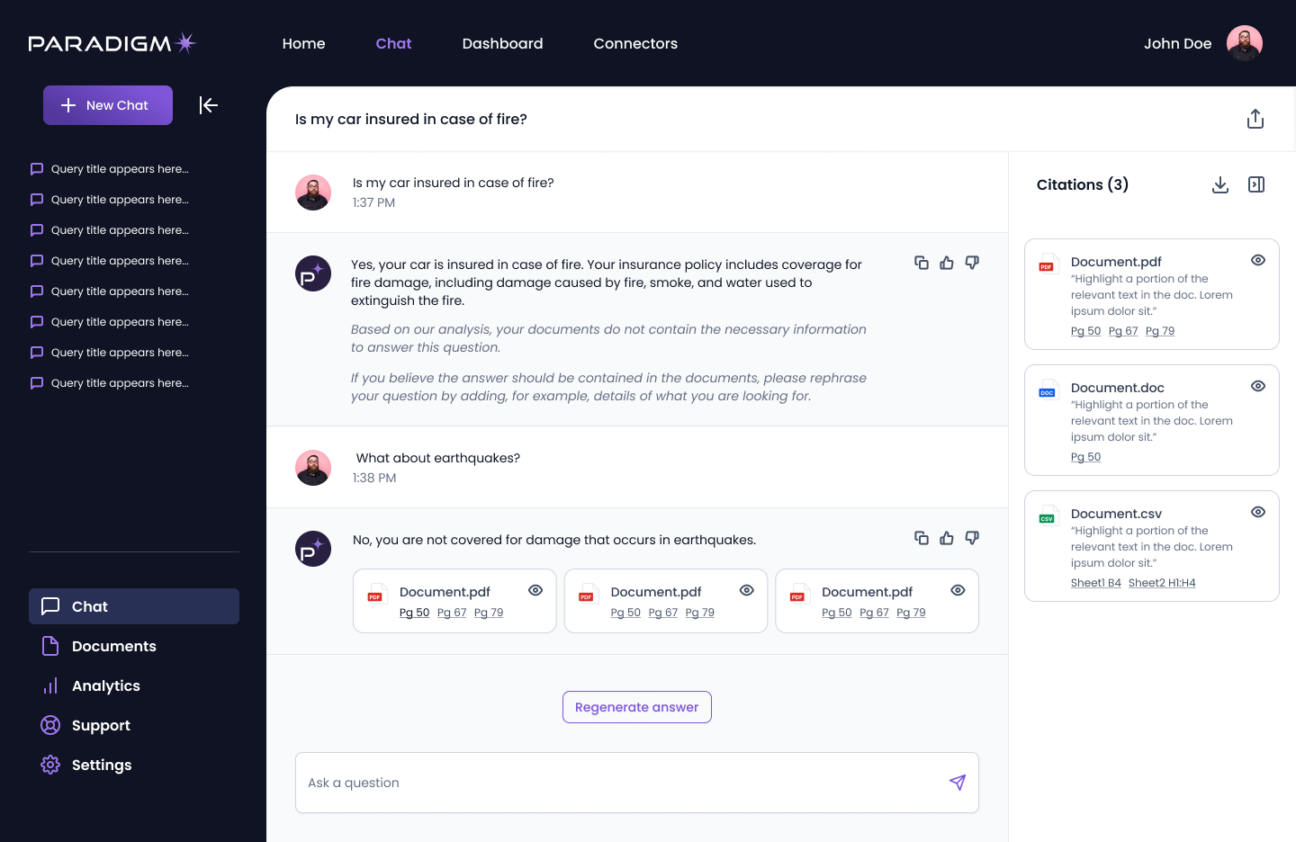

Paradigm platform interface

How did this support go?

The scoping of the project was an essential phase: it saved us a lot of time and laid out the stages of the migration.

I also really appreciated the speed with which the project was completed, the level of AWS expertise, and the fluidity of our exchanges. The team also showed a lot of curiosity about the technical challenges we raised: for example, the possibility of adding billing management via AWS alarms, and blocking consumption beyond a defined budget. In the end, we didn’t implement this feature, but the thought process was rewarding.
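As an illustration of the budget idea discussed above (which, as noted, was not implemented in this project), the decision logic could be sketched as follows. This is a minimal sketch under stated assumptions: `BudgetPolicy`, `evaluate_spend`, the 80% alarm ratio and the amounts are all hypothetical, and the code does not call any AWS service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BudgetPolicy:
    """Hypothetical budget rule; not taken from the LightOn project."""
    monthly_limit: float      # defined budget, in a single currency
    alarm_ratio: float = 0.8  # assumed share of the budget that raises an alarm


def evaluate_spend(policy: BudgetPolicy, current_spend: float) -> str:
    """Return 'ok', 'alarm' or 'block' for the current month's spend."""
    if current_spend >= policy.monthly_limit:
        # Budget reached or exceeded: further consumption would be blocked.
        return "block"
    if current_spend >= policy.alarm_ratio * policy.monthly_limit:
        # Close to the budget: notify, but keep workloads running.
        return "alarm"
    return "ok"
```

In a real setup, the spend figure would come from a billing data source and the "block" outcome would have to be wired to an actual enforcement mechanism; both are outside the scope of this sketch.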

Devoteam Revolve also fully migrated our GitLab from OVH to AWS, again with the aim of rationalising our infrastructure and switching everything to SSO. This migration was also carried out quickly, as planned in the scoping done by Devoteam Revolve. The project was well prepared, and therefore delivered on time.

What was the added value brought by Devoteam Revolve?

For us, this is a huge time saver. The work delivered on this project was of high quality, with great expertise in both migration management and everything related to the Marketplace. We now have an infrastructure deployed with Terraform and ready to use.

And what about the part related to Amazon SageMaker?

Devoteam Revolve helped us automate the production of our models and their availability on the AWS Marketplace. For us, the advantage of SageMaker is being able to manage several models simultaneously without needing to change our infrastructure.

From our Paradigm application, we just have to choose a model from a list to handle generative AI requests, depending on the version we are running. We no longer need a specific model for each environment. Previously, for cost reasons, we did not use the same models in the dev and production environments.
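To illustrate the "choose a model from a list" idea, here is a minimal sketch of a single model catalogue shared by all environments. It is not LightOn's implementation: the `ModelEntry` class, the catalogue keys, the endpoint names and the `{"inputs": ...}` payload shape are all hypothetical. The function only builds the keyword arguments that the boto3 `sagemaker-runtime` client's `invoke_endpoint` call accepts (`EndpointName`, `ContentType`, `Body`).

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    """One selectable model; every name in this sketch is hypothetical."""
    display_name: str
    endpoint_name: str  # assumed name of a deployed SageMaker endpoint


# Hypothetical catalogue, identical in dev and production.
MODEL_CATALOGUE = {
    "small": ModelEntry("Example small model", "example-small-endpoint"),
    "large": ModelEntry("Example large model", "example-large-endpoint"),
}


def build_invoke_request(model_key: str, prompt: str) -> dict:
    """Build keyword arguments for sagemaker-runtime invoke_endpoint."""
    entry = MODEL_CATALOGUE[model_key]  # raises KeyError for unknown models
    return {
        "EndpointName": entry.endpoint_name,
        "ContentType": "application/json",
        # The payload format depends on the model container; this is assumed.
        "Body": json.dumps({"inputs": prompt}).encode("utf-8"),
    }
```

With boto3 installed and AWS credentials configured, the request could then be sent with `boto3.client("sagemaker-runtime").invoke_endpoint(**build_invoke_request("small", "Hello"))`. Switching models then only means changing the catalogue key, not the infrastructure.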

What is your assessment of using SageMaker today?

We were already using SageMaker, but the automation put in place allowed us to streamline and accelerate our processes, and to prepare for the arrival of the rest of the infrastructure on AWS. All this makes it easier to publish our new models on the Marketplace.

Furthermore, SageMaker is a SaaS tool that speaks to both our developers and our researchers, which makes it easier for both audiences to use models and put them into production.

What are the next steps?

We are now working on three areas: improving the models, improving the application, and building out the infrastructure. We can now test models more quickly, adapt the application, and upgrade versions while still testing old models, thanks to the work of Devoteam Revolve on EKS, GitLab and SageMaker.

To conclude, everything on this project went very smoothly, and the skill level of the Devoteam Revolve teams very quickly gave us confidence. On very technical subjects, such as those linked to the Marketplace, LLMs or EKS, we always fear having to correct the service provider’s work afterwards, but that was not the case here: for us, the time saved was enormous.

The Better Change

Migrating and optimising resources from another hyperscaler to AWS.

Automating the creation of Machine Learning models and their distribution on the AWS Marketplace.

High-quality work, with great expertise in both migration management and the Marketplace.